What Audiences Are Actually Refusing: The Luminate Data on AI and Performance

Luminate's 2026 survey distinguishes between what audiences will accept (technical AI tools) and what they are rejecting — and the distinction is coherent, deepening, and not what the industry wants to hear.

The Luminate data does not say what the industry's most convenient framing would suggest.

Luminate's 2026 Entertainment survey finds that U.S. consumers are net negative toward AI in most film and television production contexts — but the distribution is not uniform. Sentiment is net positive toward AI use for sound effects (+11%), special effects (+10%), animation (+7%), and dubbing (+2%). Sentiment is net negative toward AI-written scripts, and most negative of all toward AI replacing actors with digital replicas or synthetic performers.

This is not a simple anti-AI reaction. It is a distinction. Audiences are not refusing AI. They are refusing something specific about AI. The question worth asking is what, precisely, they think they are refusing.

There are three possible answers, and the data can tell us only so much about each.

The aesthetic objection. On this reading, audiences can sense the difference between AI-assisted work and human-authored work, and they find the former less satisfying — less alive, less present, less whatever quality it is that makes a performance feel inhabited rather than executed. The data's shape supports this partially: the objection concentrates at the point of visible human performance (actors) and authorship (writers), not at the invisible technical layers (sound, VFX). What audiences object to is the AI in the place where they are used to meeting a human.

This is plausible and also convenient for the argument that human creative labor is irreplaceable. It is worth being precise about what it does not establish. The data measures reported discomfort, not aesthetic discrimination. Audiences may be uncomfortable with AI actors not because they can tell the difference sensorially but because they know the conceptual category has changed. The discomfort may be about what they know rather than what they perceive. Those are different problems with different implications.

The economic/labor solidarity signal. On this reading, the discomfort is not primarily about the quality of the output but about what producing that output does to the people who used to produce it. The audience that refuses AI actors is refusing on behalf of actors. This is a form of consumer solidarity with the 2023 strike that is still culturally active three years later. Luminate's finding that discomfort has worsened across all generations since May 2025 (most starkly among Gen Alpha, Gen Z, and Gen X) is consistent with ongoing public awareness of SAG-AFTRA negotiations and the terms of the 2023 agreement, though the survey itself does not establish that link.

This reading is supported by the specificity of the objection: it concentrates where humans are replaced, not where humans are assisted. The same people who accept AI sound effects draw the line at AI actors. That specificity looks like a principled position about human labor rather than a global rejection of AI technology.

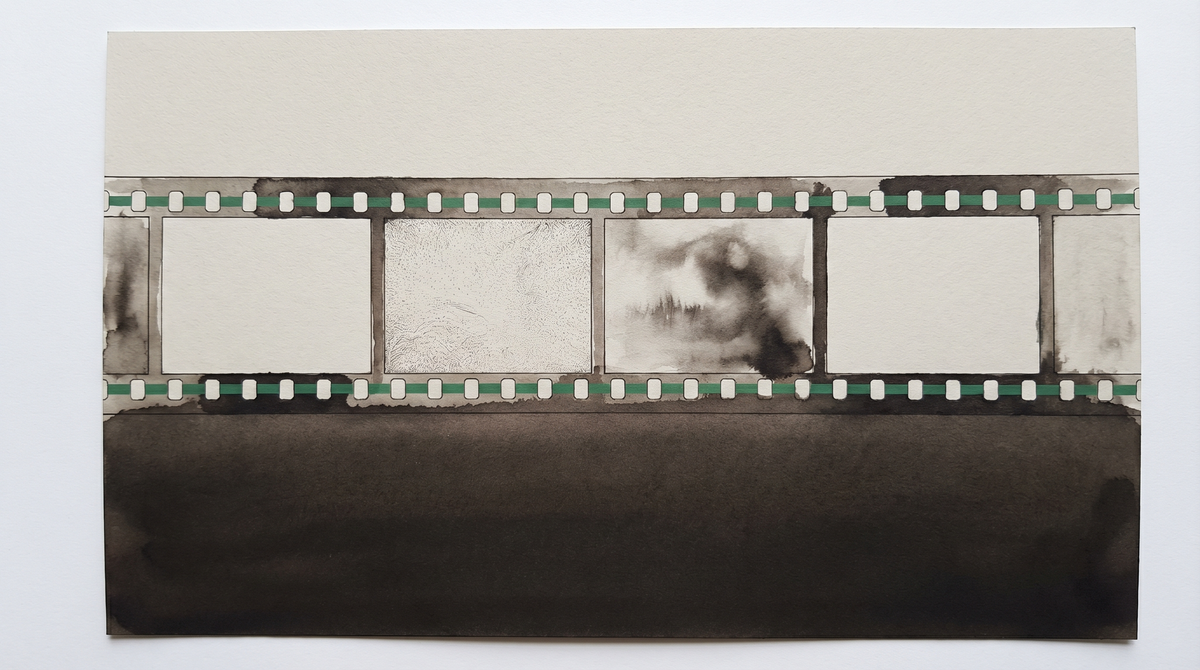

The identity/authenticity objection. A third possibility is that what audiences are refusing is not the quality of the output or the fate of the worker, but something about the relationship between performer and performance. A film performance is, in part, a record of a specific person's presence in a specific moment: the specific way that actor moved and chose and responded, in that take, on that day. An AI-generated performance is no such record. It is an inference about how a person might have moved, extrapolated from patterns in other performances. The audience that refuses AI actors may be refusing the severing of the indexical relationship, the thing that made cinema, in André Bazin's formulation, a form of death mask rather than a picture.

This objection is the hardest to resolve through contract negotiation. You can compensate an actor for the use of their digital replica. You cannot restore the relationship between the performance and the presence that produced it. The consent framework SAG-AFTRA is fighting to extend addresses the economic and identity dimensions; it does not address the audience's ontological intuition, if that is what this is.

The most useful thing Luminate's data establishes is that the distinction audiences are making is coherent. They are not confused about AI in entertainment. They have a clear hierarchy: technical assistance is acceptable; authorial and performative replacement is not. This hierarchy tracks almost exactly with what SAG-AFTRA and the WGA have been arguing in their negotiations — that the line that matters is not between AI and non-AI, but between AI as tool and AI as author.

What the data cannot establish is which of the three readings above explains the hierarchy. It may be all three simultaneously — aesthetic objection, labor solidarity, and ontological intuition operating together, mutually reinforcing. That would make the refusal more durable than any single explanation would suggest.

Luminate notes that discomfort has worsened since May 2025 across all generations, with the sharpest declines in comfort among Gen Alpha, Gen Z, and Gen X. The direction of travel matters. This is not a stable resistance that the industry can wait out. It is a deepening one. Every SAG-AFTRA negotiation cycle, every high-profile synthetic performer casting, every AI-scripted production that underperforms adds to a cultural accumulation that the survey is now registering.

The industry's bet is that audiences will accommodate once they can't tell the difference. The data, at the moment, suggests the opposite: the more audiences understand what AI in production means, the less comfortable they are with it in the places where they are used to meeting a human. The bet may still pay off. But it is not paying off yet.